Yes, there are free websites that can help you detect deepfakes. Tools like Deepware Scanner, Hive Moderation, Reality Defender, Sensity AI, and DFDetect analyze videos, images, or audio files (coverage varies by tool) and estimate whether they are real or AI-generated. No technical skills are required: upload a file and you typically get a result within seconds to a couple of minutes.

But before we get into the tools, you need to understand what you’re actually dealing with, because deepfakes have gotten terrifyingly good in 2026.

A deepfake is AI-generated or AI-manipulated media: a video, image, or audio clip where a real person’s face, voice, or identity has been convincingly replaced with something fake. The term comes from “deep learning” + “fake.”

In simple terms, it is a lie wrapped in a realistic human face.

Much of the technology behind it traces back to Generative Adversarial Networks (GANs), a type of AI system where two neural networks compete against each other. One generates the fake, and the other tries to detect it. Over time, the generator gets so good that even the detector struggles to catch it. So do human eyes.
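If you want to see that tug-of-war in miniature, here is a toy sketch in plain Python. It is not how real deepfake generators are built: the "media" is a single number, the generator only learns an offset `b`, and the detector is a one-line logistic model. All function names and settings are made up for illustration.

```python
import math
import random

def sigmoid(s):
    # Numerically stable logistic function.
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)

def discriminator_step(w, c, reals, fakes, lr=0.05):
    """One gradient step for D(x) = sigmoid(w*x + c): push D toward 1 on reals, 0 on fakes."""
    gw = gc = 0.0
    for x in reals:
        err = sigmoid(w * x + c) - 1.0  # gradient of -log D(x) w.r.t. the logit
        gw += err * x / len(reals)
        gc += err / len(reals)
    for x in fakes:
        err = sigmoid(w * x + c)        # gradient of -log(1 - D(x)) w.r.t. the logit
        gw += err * x / len(fakes)
        gc += err / len(fakes)
    return w - lr * gw, c - lr * gc

def generator_step(b, w, c, noise, lr=0.05):
    """One gradient step for G(z) = z + b: shift the fakes so D rates them as more real."""
    gb = 0.0
    for z in noise:
        d = sigmoid(w * (z + b) + c)
        gb += -(1.0 - d) * w / len(noise)  # gradient of -log D(G(z)) w.r.t. b
    return b - lr * gb

def train(steps=2000, batch=32, seed=0):
    """Alternate detector and generator updates; return the learned generator offset."""
    rng = random.Random(seed)
    w, c, b = 0.0, 0.0, 0.0  # detector weights and generator offset
    for _ in range(steps):
        reals = [rng.gauss(4.0, 1.0) for _ in range(batch)]  # "real" data centered at 4
        noise = [rng.gauss(0.0, 1.0) for _ in range(batch)]
        fakes = [z + b for z in noise]
        w, c = discriminator_step(w, c, reals, fakes)
        b = generator_step(b, w, c, noise)
    return b
```

Each round, the detector learns to separate real from fake, and the generator then nudges its output toward whatever the detector currently rates as real. In a toy setup like this the two can chase each other rather than settle, which is a small-scale version of the same arms race.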

This is not science fiction. This is happening right now on social media, in boardrooms, in political campaigns, and even in your WhatsApp messages.

Here is where things get uncomfortable. The numbers around deepfakes in 2025 and 2026 are not just alarming; they are a wake-up call.

That last number hits the hardest. If 99.9% of people cannot reliably spot a deepfake with their own eyes, you need tools to do it for you. That is exactly what this article is about.

Before jumping into the websites, it helps to understand what these tools are actually doing under the hood. Deepfake detection is not magic; it is pattern recognition at a forensic level.

Here is what these tools analyze when you upload a file:

Facial and Visual Analysis

Audio Analysis

Metadata and File Structure

Behavioral and Motion Analysis

Modern detection platforms run these checks together and return a confidence score that tells you not just whether something looks fake, but how likely it is to be fake and, in many cases, where the manipulation occurred.
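To make the idea of a combined confidence score concrete, here is a minimal sketch in Python. It assumes each check (visual, audio, metadata, motion) has already produced a fake-probability between 0 and 1. The function name, the check names, and the weights are all hypothetical; real platforms do not publish how they combine their signals.

```python
def combine_checks(scores, weights=None):
    """Combine per-check fake-probabilities (0..1) into one weighted score.

    Returns the overall score and the name of the most suspicious check.
    """
    if not scores:
        raise ValueError("at least one check score is required")
    # Default to equal weights when none are given (an assumption).
    weights = weights or {name: 1.0 for name in scores}
    total_weight = sum(weights[name] for name in scores)
    overall = sum(scores[name] * weights[name] for name in scores) / total_weight
    most_suspicious = max(scores, key=scores.get)
    return round(overall, 3), most_suspicious


# Example: the visual check is highly suspicious, the audio check is not.
result = combine_checks(
    {"visual": 0.9, "audio": 0.2, "metadata": 0.4, "motion": 0.7}
)
```

The second return value is what lets a report say where the problem is, not just that there is one.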

Here is your go-to list. Each of these tools is accessible without a heavy technical setup, and most offer free tiers that work well for individuals and small teams.

Best for: Video analysis

Deepware is one of the most well-known free deepfake scanners available online. It was built specifically to detect manipulated video content using neural networks trained on large datasets of real and synthetic media.

Using Deepware is as simple as it gets. You visit the website, paste a public video URL or upload a file directly from your device, and hit Scan. The platform processes the footage frame by frame and typically delivers a result in under two minutes. What you get back is a clear risk score along with a visual breakdown of which specific frames triggered the detection, so you are not just told something is fake; you can see exactly where the manipulation appears.
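The frame breakdown described above can be pictured with a short Python sketch. This is not Deepware's code or API. It assumes a detector has already given every frame a fake-probability, and the function name and the 0.5 threshold are hypothetical.

```python
def flagged_ranges(frame_scores, threshold=0.5):
    """Group consecutive frames scoring at or above the threshold into (start, end) ranges."""
    ranges = []
    start = None
    for i, score in enumerate(frame_scores):
        if score >= threshold and start is None:
            start = i                      # a suspicious run begins
        elif score < threshold and start is not None:
            ranges.append((start, i - 1))  # the run ended on the previous frame
            start = None
    if start is not None:                  # the run continues to the last frame
        ranges.append((start, len(frame_scores) - 1))
    return ranges


# Example: frames 2-3 and frame 5 look manipulated.
ranges = flagged_ranges([0.1, 0.2, 0.9, 0.8, 0.1, 0.7])
```

Grouping frames this way is what turns a single risk number into something you can scrub to in the video.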

What it detects: Face swaps, lip-sync manipulation, and general video deepfakes.

| Feature | Details |
|---|---|
| Media Types | Video |
| Free Tier | Yes |
| Sign-up Required | No |
| Result Type | Risk score + frame breakdown |
| Speed | Under 2 minutes |

Best for: Images, video, and audio in one place

Hive Moderation is a professional-grade AI content moderation platform that includes a powerful deepfake detection module. It handles images, videos, and audio files, making it one of the most versatile free options available.

What makes Hive stand out is its real-time detection capability. It does not just flag content; it gives you confidence percentages broken down by media element, so you know exactly which part of the file is suspicious.

Getting started with Hive requires no account; just head to the demo tool on their website, drop in your file or paste a URL, and the system takes over from there. Within seconds, it returns a percentage-based authenticity score broken down by element, meaning you can see whether the issue is in the video frames, the audio track, or both. This level of granularity makes it genuinely useful for anyone who needs to understand not just whether something is fake, but how it was faked.

What it detects: AI-generated images, deepfake videos, and voice-cloned audio.

| Feature | Details |
|---|---|
| Media Types | Image, Video, Audio |
| Free Tier | Yes (demo available) |
| Sign-up Required | Optional |
| Result Type | Confidence percentage per element |
| Speed | Seconds to under 1 minute |

Best for: Multi-modal detection with high accuracy

Reality Defender is one of the most credible names in deepfake detection right now. Gartner recently recognized it as a leading deepfake detection company, and it offers free access with up to 50 audio or image scans per month through its API and web app.

The platform uses an ensemble of AI models running simultaneously, not just one algorithm. This layered approach means it catches deepfakes that single-model tools would miss.

Reality Defender requires a free account to get started, which takes under a minute to set up. Once inside, you are met with a clean drag-and-drop interface where you upload your image or audio file. The platform then runs it through its ensemble of models and returns a color-coded manipulation probability score: green for authentic, red for manipulated, with shades in between reflecting the confidence level. For anything serious, you can download a full PDF report that documents the findings in a format suitable for sharing with a legal team or HR department.
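A color-coded score is simply a probability mapped onto bands. The Python sketch below shows the idea. The cut-off values and the intermediate color names are assumptions for illustration, not Reality Defender's actual thresholds.

```python
def color_band(probability):
    """Map a manipulation probability (0..1) to a traffic-light style label."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    if probability < 0.25:
        return "green"   # likely authentic
    if probability < 0.5:
        return "yellow"  # leaning authentic, low confidence
    if probability < 0.75:
        return "orange"  # leaning manipulated, low confidence
    return "red"         # likely manipulated
```

Anything that lands in the middle bands is a signal to run the file through a second tool rather than to trust either verdict.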

What it detects: Deepfake images, cloned audio, and AI-manipulated media across formats.

| Feature | Details |
|---|---|
| Media Types | Image, Audio |
| Free Tier | Yes (50 scans/month) |
| Sign-up Required | Yes |
| Result Type | Color-coded probability + PDF report |
| Speed | Near real-time |

Best for: Multi-layer forensic analysis

Sensity AI is where detection gets serious. It was originally built for enterprise clients, government agencies, media organizations, and law enforcement, but it provides a free demo tier that works well for individual use.

What separates Sensity from the others is its multi-layer forensic approach. It does not just look at the face. It analyzes visuals, file structure, metadata, acoustic patterns, and behavioral cues all at once. The result is a detailed report designed to serve as supporting evidence in legal or corporate investigations.

Accessing Sensity requires signing up for a demo account, after which you land inside their detection hub, a professional interface built for serious analysis. You upload your file or paste a public URL, and the system runs it through every layer of its detection engine simultaneously. Rather than a simple pass or fail, what comes back is a structured forensic report complete with confidence scores, visual indicators highlighting the exact areas of concern, and metadata insights. This is the kind of output you would bring to a boardroom or a courtroom, not just a browser tab.

What it detects: Deepfake videos, synthetic images, cloned audio, and AI-manipulated media across all formats.

| Feature | Details |
|---|---|
| Media Types | Image, Video, Audio |
| Free Tier | Yes (demo access) |
| Sign-up Required | Yes |
| Result Type | Full forensic report with confidence scores |
| Speed | A few seconds |

Best for: Quick image checks with per-face scoring

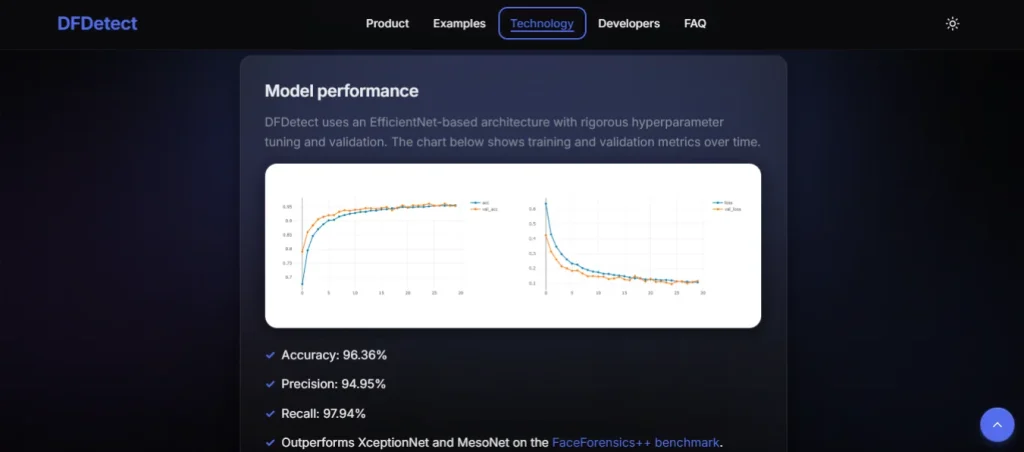

DFDetect requires zero setup and zero sign-up. You land on the page, drag your image onto the upload area, and within a few seconds, the tool returns an authenticity score for every face it finds in the image. If there are multiple people in the photo, each one gets its own score independently. The simplicity here is intentional; it is built for people who need a fast answer, not a deep analysis session. It is particularly useful when you receive a profile picture, a headshot from a job applicant, or an image in a news article and want to quickly verify whether the face is real or AI-generated.

What it detects: AI-generated faces and manipulated images.

| Feature | Details |
|---|---|
| Media Types | Image |
| Free Tier | Yes |
| Sign-up Required | No |
| Result Type | Per-face authenticity score |
| Speed | Seconds |

| Website | Best For | Media Types | Free | Sign-up |
|---|---|---|---|---|
| Deepware Scanner | Video deepfakes | Video | ✅ | ❌ |
| Hive Moderation | All-in-one detection | Image, Video, Audio | ✅ | Optional |
| Reality Defender | High-accuracy multi-modal | Image, Audio | ✅ | ✅ |
| Sensity AI | Forensic-level analysis | Image, Video, Audio | ✅ | ✅ |
| DFDetect | Quick image checks | Image | ✅ | ❌ |

Tools are your best defense, but training your eye does not hurt either. Here are the most reliable visual tells to watch for when reviewing suspicious media manually:

Keep in mind: in 2026, even trained professionals miss high-quality deepfakes. Manual detection alone is not reliable. Use the tools above as your primary defense.

“I received a suspicious video message from someone I know.” Upload it to Deepware Scanner or Hive Moderation immediately. Do not share it further until verified. If confirmed to be fake, report it to the platform it came from and notify the person being impersonated.

“A job applicant’s video interview looked slightly off.” Run the recording through Sensity AI or Reality Defender. Deepfake-assisted identity fraud in hiring became a documented problem in 2024, with over 300 companies unknowingly hiring impostors linked to foreign government operations.

“I saw a viral video of a public figure saying something shocking.” Before sharing or reacting, upload it to one of the tools above. Politicians were impersonated in 56 separate deepfake incidents in the first quarter of 2025 alone.

“Someone is using my face in a video I never recorded.” This is a growing threat. Document everything, use Sensity AI to generate a forensic report, and contact a legal professional. The US passed the TAKE IT DOWN Act in May 2025, which requires platforms to remove non-consensual intimate imagery, including deepfakes, when a victim requests it.

The deepfake detection market is not slowing down. It is projected to grow from $5.5 billion in 2023 to $15.7 billion by 2026, a 42% annual growth rate driven by governments, enterprises, and media organizations investing in protection.
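You can sanity-check those figures yourself with the standard compound annual growth rate formula. The short Python sketch below treats 2023 to 2026 as three years of growth; the function names are just for illustration.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate implied by a start value, an end value, and a period."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value, annual_rate, years):
    """Value after compounding at a fixed annual rate for a number of years."""
    return start_value * (1 + annual_rate) ** years


# Market size in billions of US dollars, 2023 to 2026.
implied_rate = cagr(5.5, 15.7, 3)
projected_2026 = project(5.5, 0.42, 3)
```

Both directions agree to within rounding: the implied rate comes out just under 42%, and compounding $5.5 billion at 42% for three years lands slightly above $15.7 billion.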

Some of the most promising developments happening right now:

The arms race between deepfake generators and detectors is very real. Every time detection improves, generation adapts. But the tools available today are far more capable than most people realize, and using them takes less than two minutes.

Deepfakes are no longer a distant threat that only affects celebrities, politicians, or large corporations. They are showing up in everyday life: in job interviews, video calls, social media feeds, and even private messages. And the technology behind them is only getting sharper.

The good news is that you do not have to be a cybersecurity expert to protect yourself. The five websites covered in this article put powerful detection technology directly in your hands, for free, with no technical background required. Whether you are verifying a suspicious video, checking a profile picture, or investigating a viral clip before you share it, there is a tool here that can give you a clear answer in minutes.

The most dangerous thing you can do right now is assume you will be able to spot a deepfake on your own. The data proves otherwise. Make it a habit to verify before you trust, share, or act on any media that carries weight. Your reputation, your finances, and sometimes your safety can depend on it.

Stay skeptical. Stay protected.

No, these tools are not 100% accurate. Most leading tools reach 85–98% accuracy in controlled conditions, but high-quality modern deepfakes can push that lower. For important decisions, running a file through multiple tools is the smarter approach.
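One simple way to use several tools together is to look for agreement before you trust a verdict. The Python sketch below assumes you have noted down each tool's fake-probability by hand. The tool labels, the 0.5 cut-off, and the two-thirds agreement rule are all hypothetical choices, not a standard.

```python
def consensus(verdicts, fake_threshold=0.5, min_agreement=2 / 3):
    """Turn several tools' fake-probabilities into one cautious verdict.

    verdicts maps a tool label to that tool's fake-probability (0..1).
    """
    if not verdicts:
        raise ValueError("at least one verdict is required")
    votes_fake = sum(1 for p in verdicts.values() if p >= fake_threshold)
    share_fake = votes_fake / len(verdicts)
    if share_fake >= min_agreement:
        return "likely fake"
    if share_fake <= 1 - min_agreement:
        return "likely authentic"
    return "inconclusive"  # the tools disagree, so treat the media with caution


# Example: two of three tools flag the file.
verdict = consensus({"tool_a": 0.9, "tool_b": 0.8, "tool_c": 0.3})
```

An "inconclusive" result is useful in its own right: it tells you not to share or act on the media until you have more evidence.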

Yes, several of these tools can detect audio deepfakes. Hive Moderation, Reality Defender, and Sensity AI all include audio detection alongside their image or video analysis.

Reputable platforms like Reality Defender and Sensity AI have privacy policies in place. For highly sensitive content, check each platform’s data handling policy before uploading, or use API-based tools that process content without storing it.

Most tools return results within seconds to two minutes, depending on file size and media type.

Report it to the platform where it appeared, notify the person being impersonated, document everything with screenshots or forensic reports, and contact authorities if fraud or criminal activity is involved.

Whether a deepfake is illegal depends on how it is used. Non-consensual deepfake content, especially explicit material, is illegal across 46 US states and increasingly regulated in Europe under the EU AI Act. Malicious impersonation for fraud is criminal worldwide.

Yes. All five websites listed above are browser-based and work on mobile without needing to install any app.


© 2026 Mavensum. All rights reserved
